As viral misinformation continues to reach millions on social media, major platforms have turned to fact-checkers as part of the solution.

“The role of fact-checkers has really evolved from urban myth debunkers,” said Kalev Leetaru, a senior fellow at George Washington University. “One thing that inspired us was the way that Silicon Valley has centralized around fact-checkers as third-party arbiters of truth.”

Leetaru, who recently launched RealClearPolitics’ Fact Check Review, was referring to Facebook’s ongoing partnership with fact-checking organizations, which launched in December 2016 in an effort to cut down on the amount of misinformation.

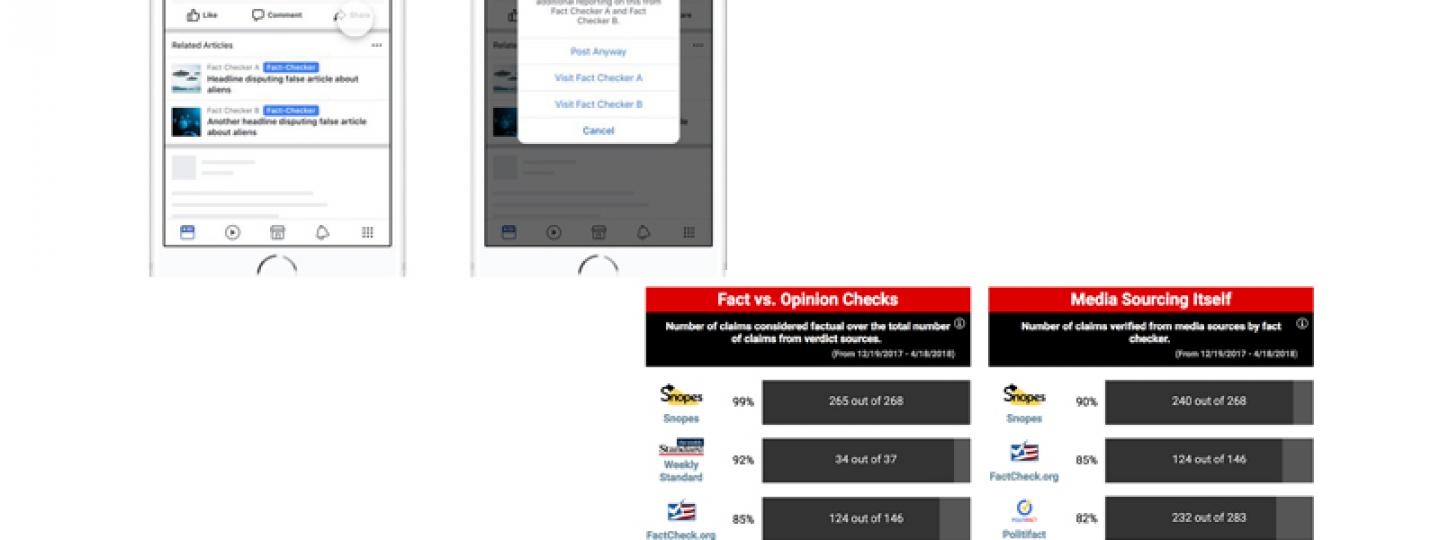

Under that program — which is now active in 10 countries around the world — fact-checkers can review viral posts and debunk them. Facebook then limits their reach in News Feed. (Disclosure: Being a signatory of the International Fact-Checking Network’s code of principles is a necessary condition for joining the project.)

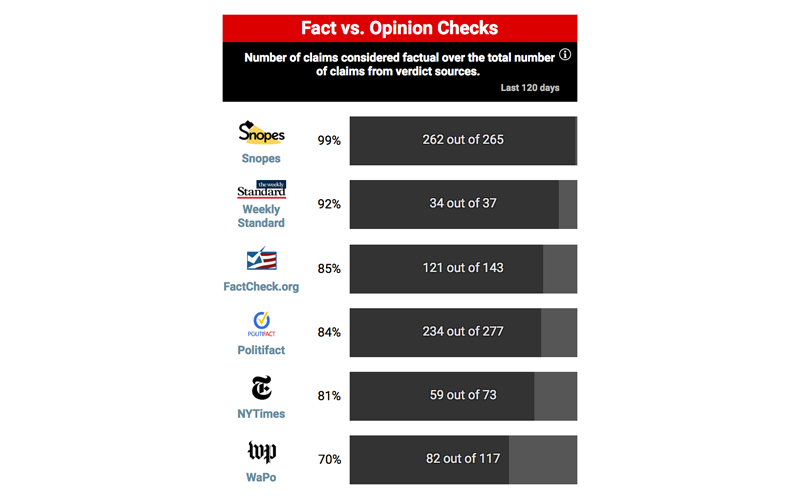

Fact Check Review analyzes six American fact-checking initiatives: PolitiFact (a Poynter-owned project), Snopes, The Weekly Standard’s Fact Check, The Washington Post Fact Checker, Factcheck.org and The New York Times. All except The Times and The Post contribute to Facebook’s fact-checking program.

The project only reviews fact checks “bearing on civic and public concern,” defined as “any topic that relates to the political or social environment.” This excludes “run-of-the-mill” urban legends and other apolitical types of fake news.

“Our project strictly involves codifying fact checks, rather than evaluating them,” Leetaru said. “In this regard, our team's role is limited to extracting and compiling the details of each fact check as it stands. We're not going back and evaluating sourcing or methodology or auditing whether a given conclusion was actually supported by the stated evidence.”

In explaining the need for a systematic analysis of fact-checking, Leetaru cited a notable hiccup from March: Snopes’ debunk of a satirical story about CNN and a washing machine. That article, which resulted in The Babylon Bee’s story being lowered in News Feed and a warning to page administrators, caught flak for labeling what some saw as an obvious piece of satire as false. Facebook later removed the flag.

“That example really demonstrated the phenomenal power that now resides with fact checks — a single decision of true or false is right on the edge,” Leetaru said. “(This project) really gets to that question of once the fact-checkers are being put into this central role, what does it actually look like?”

It’s a valid inquiry — and Leetaru isn’t alone in pursuing it.

In late March, the Media Research Center, a conservative watchdog organization, launched a project called Fact-Checking the Fact-Checkers. That initiative is under the organization’s NewsBusters blog, whose stated goal is to expose and combat “liberal media bias.”

“MRC routinely finds instances when fact-checkers bend the truth or disproportionately target conservatives,” MRC president Brent Bozell said in a statement. “We are assigning our own rating to their judgments and will expose the worst offenders.”

So far, the project — whose landing page is titled “Don't Believe the Liberal ‘Fact-Checkers’!” — has rated fact checks that cast Democrats in a negative light “The Real Deal” while assigning “Fully Fake” to those that corroborate their statements. NewsBusters more broadly has been criticized in the past for taking quotes out of context and making false claims about political candidates.

While it’s couched in the idea of analyzing fact-checkers, NewsBusters’ project is categorically different from what Fact Check Review is doing — it’s explicitly partisan. Leetaru said he has no such political motivation, pointing to the project’s public methodology as an indication of its nonpartisanship. Here’s an excerpt:

“… we break each fact check into the discrete claims it evaluates; summarize each claim using the fact checker’s own words; separate the list of sources to associate each source with the specific claim(s) it was used to confirm or refute; assess a claim as ‘fact’ or ‘opinion’; and record whether the fact checker specifically notes that their determination was based on a lack of evidence or belief that the claim is misleading and classify each source into a type taxonomy.”
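As a rough illustration only, the coding scheme described in that excerpt could be represented as a small data model. The sketch below is not Fact Check Review's actual implementation; every class, field and function name in it (`Source`, `Claim`, `FactCheck`, `fact_share`) is hypothetical, as are the example claims and URLs.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class ClaimKind(Enum):
    FACT = "fact"        # can be definitively proven or disproven
    OPINION = "opinion"  # no definitive right or wrong answer


@dataclass
class Source:
    url: str
    source_type: str  # hypothetical taxonomy label, e.g. "news media"


@dataclass
class Claim:
    summary: str                      # the claim in the fact-checker's own words
    kind: ClaimKind                   # coded as fact or opinion
    sources: List[Source] = field(default_factory=list)
    cites_lack_of_evidence: bool = False  # ruling rests on missing evidence
    cites_misleading: bool = False        # ruling rests on the claim being misleading


@dataclass
class FactCheck:
    outlet: str
    claims: List[Claim] = field(default_factory=list)


def fact_share(checks: List[FactCheck]) -> float:
    """Share of coded claims labeled FACT across the given fact checks."""
    claims = [claim for check in checks for claim in check.claims]
    if not claims:
        return 0.0
    return sum(claim.kind is ClaimKind.FACT for claim in claims) / len(claims)


# Made-up example: one fact check split into two discrete claims.
example = FactCheck(
    outlet="Example Outlet",
    claims=[
        Claim(
            summary="Unemployment fell last year.",
            kind=ClaimKind.FACT,
            sources=[Source("https://example.org/data", "government data")],
        ),
        Claim(
            summary="The policy was a disaster.",
            kind=ClaimKind.OPINION,
            cites_misleading=True,
        ),
    ],
)

share = fact_share([example])
```

Keeping each source attached to the specific claim it supports, rather than to the fact check as a whole, mirrors the excerpt's step of associating sources with individual claims.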

“We’re not trying to fact-check the fact-checkers. We’re not going in and saying, ‘This is a good fact check and this is a bad fact check,’” Leetaru said. “Our goal of this project is strictly to codify everything as it stands for a reader evaluation.”

The project began last summer, when a team of interns started sorting through and coding months of fact checks from different organizations. During that process, several questions stood out to Leetaru:

- Sourcing. What is truth to a fact-checker?

- Claim selection. Which ones do they choose and why?

- Fact vs. opinion. When you’re evaluating a statement, where do you draw the line?

To answer them, Fact Check Review employs a multi-pronged approach, three prongs of which are visible on the project’s landing page.

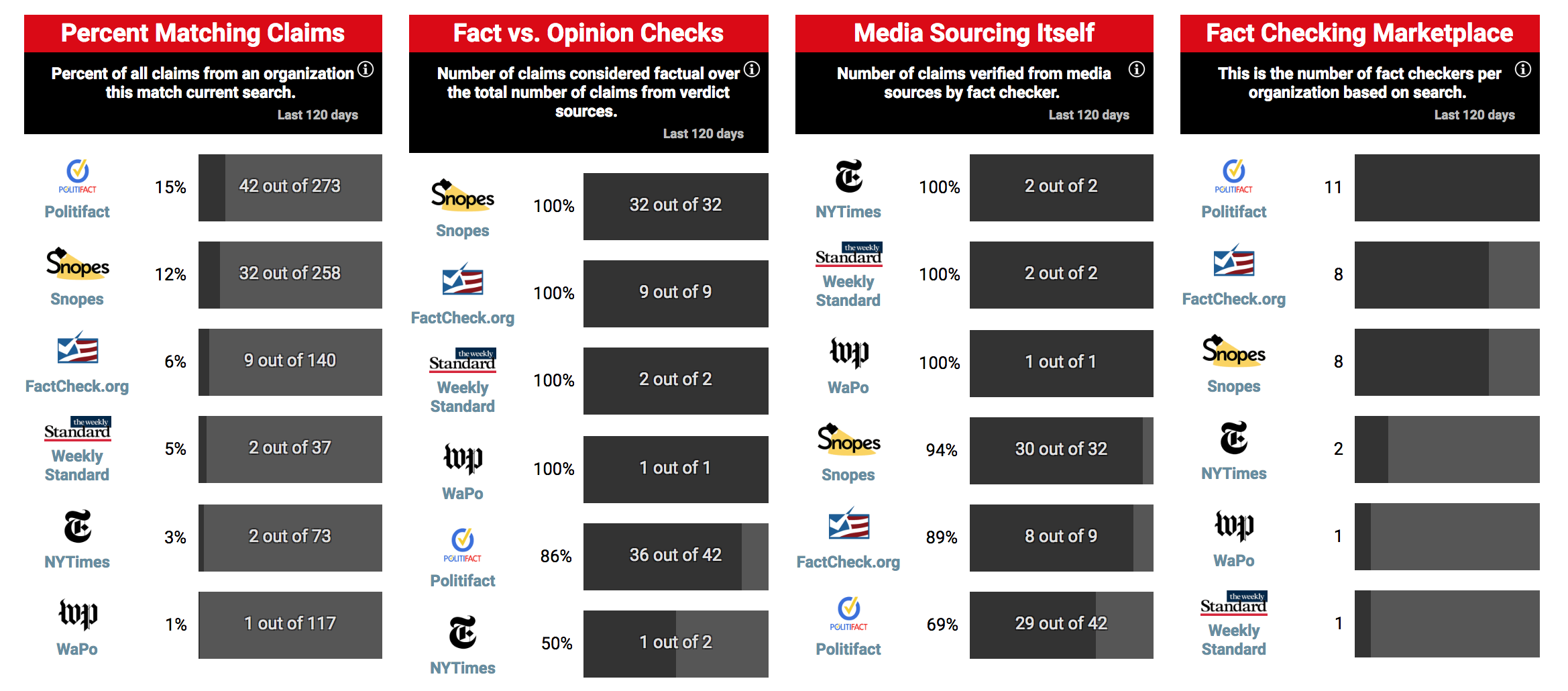

First, Fact Check Review classifies each fact check as a “fact” or “opinion” check. Leetaru said that his team examines whether a fact check addresses a claim that can be confirmed by statistics or another form of explicit proof, or whether it rates an opinion. The latter would be a weighty charge since, per the IFCN’s code of principles, fact-checkers aren’t supposed to fact-check opinions.

“The idea of this field is to see whether fact-checkers are focusing on factual claims that can be definitively proven or disproven or on opinions for which there is not a definitive ‘right’ or ‘wrong’ answer,” he said. “Thus, ‘Obama was the 25th president’ would be listed as Fact, since it can be definitively proven or disproven, even though it is a false statement.”

According to the review as of publication, Snopes has the highest ratio of “fact” checks (99 percent), while The Fact Checker has the lowest (70 percent). As one example of an “opinion” check, the review cites a Fact Checker story from earlier this month that picks apart 24 quotes from two Trump speeches — providing context for most but resulting in only three actual fact check ratings. Still, Fact Check Review codified four unrated Trump claims and one claim rated "Not the whole story" from the story as “opinion” checks.

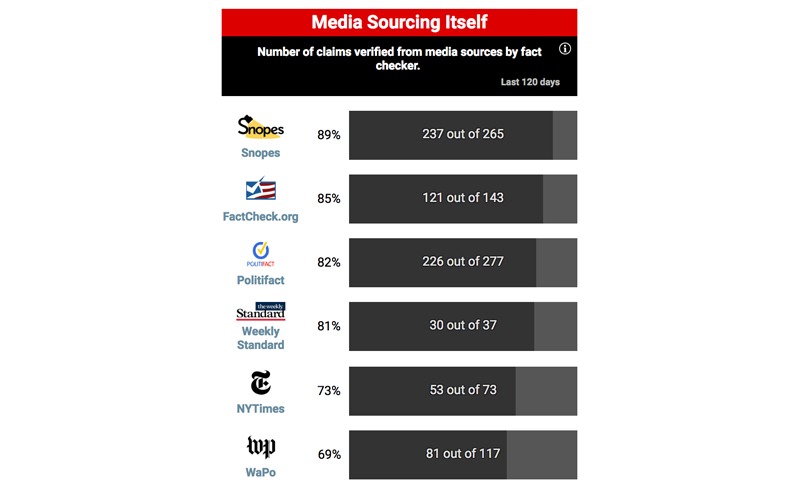

Second, the project looks at the extent to which the fact-checkers cite other media stories in their work. According to the review as of publication, Snopes had the highest proportion of fact checks citing other news articles (89 percent), while The Fact Checker had the lowest (69 percent).

Third, Fact Check Review keeps tabs on how many fact-checkers each organization employs.

Additionally, users can search Fact Check Review for all the articles related to a certain topic. For example, a search for Donald Trump surfaces new data related to the previous three categories, in addition to the proportion of fact checks that include that keyword.

When asked about Fact Check Review, PolitiFact Editor Angie Holan said she mostly takes issue with the project’s categorization of media sourcing.

“We put our own fact checks in the source list when it’s related to claims that we’ve fact-checked before,” she said. “We do cite primary sources as well — we wouldn’t have a fact check that’s just sourced to us … we also put stuff in there that’s secondary or interesting.”

In an email to Poynter, Factcheck.org editor Eugene Kiely agreed that the measure is flawed since it doesn’t make a distinction between fact checks that simply include links to other media outlets and those that rely on them to verify claims. Such was the case when Fact Check Review marked a Factcheck.org debunk about Facebook giving the Koch brothers user data as relying on media sourcing, when in fact it used a combination of media and primary sources, he said.

“On the day it was introduced, RCP says 85 percent of our claims since Dec. 10 were ‘verified from media sources’ — 121 of 142 fact checks,” he said. “That’s simply not an accurate measure of how we verify facts.”

In a letter sent to Leetaru on April 9, The Fact Checker’s Sal Rizzo — who authored the story about Trump's two speeches — also pushed back against the project.

“You argue that one of our fact checks about President Trump ‘would seem to reinforce concerns about subjectivity,’” Rizzo wrote. “But your summary of our fact check plainly misrepresented what we wrote.”

In his article about Fact Check Review’s launch, Leetaru wrote that the fact check, which gave a Trump address on immigration and sanctuary cities Four Pinocchios, was a case of a fact-checker rating objectively true statements false because they lacked context. Rizzo said that wasn’t the case, and that the outlet’s rating was based on five statements, not two, as Leetaru had originally said.

In a response article published on RealClearPolitics on Monday, Leetaru assessed the letter and defended his original thought process.

“A casual reader looking for a quick answer as to whether one or more of the president’s statements was true would likely assume the Four Pinocchios rating applied to all five claims, especially in the absence of an individual rating beside each claim,” he wrote.

Poynter reached out to The Weekly Standard's Holmes Lybrand for his thoughts on Fact Check Review, but he declined to comment.

Still, despite the criticism, Fact Check Review is different from projects like NewsBusters’, which Holan said are bent on publishing closed-minded critiques of fact-checking as a medium. She said that no online publisher is exempt from criticism — it comes with the territory.

“I have no problem with people looking at our fact checks and doing analysis on them,” she said. “We’ve been subject to reviews for pretty much the entire time we’ve been around. I think it’s healthy for the media to police each other.”

And sometimes that policing is internal.

In March, PolitiFact received the results of a months-long linguistic analysis of its fact checks, conducted by researchers at Carnegie Mellon University and the University of Washington. The report, funded by a grant the Knight Foundation awarded PolitiFact in June, used natural language processing to analyze about 10,000 articles between 2007 and 2018 to determine whether or not there was a Republican or Democratic bias in the wording.

PolitiFact executive director Aaron Sharockman told Poynter in a message that the outlet will publish the results in the coming weeks.

Holan said the effort is indicative of PolitiFact’s willingness to accept analyses of its fact checks.

“I’m very grateful to be able to work in journalism and do the kind of work we do at PolitiFact. I think my colleagues feel the same way — we want to get better at fact checking,” Holan said. “From that point of view, we welcome constructive criticism.”