This is the second article in a three-part series on the people behind the misinformation phenomenon. Part one featured students working on misinformation-related projects and part three will feature notorious fake news writers.

LYON, France — Amy Zhang was thousands of miles away when her work was presented at The Web Conference. She’s a computer science researcher at the Massachusetts Institute of Technology, but she still hasn’t found a way to be in two places at once.

“I heard it went really well,” she told Poynter. “The two projects aren’t totally unrelated. It’s just unfortunate that they were on the same day.”

While co-author An Xiao Mina, director of product at the nonprofit technology company Meedan, was presenting in Lyon in late April, Zhang — a former software engineer — was helping a master’s student present a project on online harassment at another conference in Montreal. Their paper looks at the different ways that online news articles signal credibility to readers.

At The Web Conference, Mina said they found that things like aggressive ad placement reduced participants’ perceptions of credibility, while the number of ads did not. At the same time, clickbait titles and an emotionally charged tone negatively affected articles’ credibility.

Check out this interesting, *10-author* paper on what signals give news articles credibility, which was published in partnership with @snopes, @AP and others. #TheWebConf https://t.co/1rD07WhkBe

— Daniel Funke (@dpfunke) April 25, 2018

Those findings come at a time of media hand-wringing over how news consumers are being gamed by online misinformation.

Zhang and Mina co-authored their paper with 12 other researchers, technologists and fact-checkers who are part of the Credibility Coalition, a collaborative effort founded by Meedan and Hacks/Hackers to come up with solutions to declining trust in news. Member organizations include Snopes, the Associated Press and Climate Feedback.

That collaboration — coupled with the fact that this was the first year that the 24-year-old Web Conference had a track dedicated to fact-checking and misinformation — speaks volumes about the demand for misinformation research amid a growing interest in fake news over the past few years. Zhang said that expansion is what first piqued her interest in researching the phenomenon, which she’s done since starting MIT’s doctoral program in 2014.

“I’m a computer scientist,” she said. “It was kind of natural for misinformation to appear on my radar. The focus of my work is more on tool-building — what kind of tools could we provide everyday users to better manage their information and the content that they see.”

Over the past year, interest in misinformation research has ballooned. Fake news studies regularly attract high-profile — albeit frequently flawed — news coverage. Different organizations are cataloging the latest research, including the International Fact-Checking Network.

But misinformation research isn’t confined to labs, classrooms and online portals — technology companies are increasingly drawing upon that work to inform how they address fake news on their platforms.

On Wednesday, Google announced its involvement with Datacommons.org, a new project aimed at sharing platform data with researchers and journalists. Facebook announced a similar program last month to help researchers measure the impact of social media on elections.

“I think papers in themselves are not that useful except for other academics,” Zhang said. “Researchers can provide a lot in terms of policy recommendations and potentially helping governments and tech companies understand the problem better.”

From professors to doctoral researchers, here are some of the people who are working to advance our collective understanding of misinformation. Know someone you think we should know about? Email us at factchecknet@poynter.org.

Leticia Bode, Georgetown University

A few years ago, when Leticia Bode’s research focused mostly on political information on social media, the top question people asked her was always about the fake stuff.

“Exposure to political information may be helpful for motivating turnout or other types of participation, but if it misinforms, is that a worthwhile tradeoff?” she told Poynter in an email. “I thought I needed to start investigating misinformation on social media in order to answer that question, but then I became more intrigued with correction of misinformation more specifically.”

So when she started researching misinformation, it seemed like a natural extension of her work as an assistant professor in the Communication, Culture and Technology program at Georgetown University. Now, she’s the author of several studies on the phenomenon — specifically about the effect of corrections on social media.

One of her studies was even used by Facebook to further develop its anti-misinformation efforts.

“Academic research isn't always immediately or effectively used by those that it could help, so that was a very proud achievement for us,” she said.

That study, titled “In Related News, That Was Wrong: The Correction of Misinformation Through Related Stories Functionality in Social Media” and co-authored by Emily K. Vraga, was cited as a basis for a December change in the way that Facebook deals with fake news. Instead of labeling stories debunked by fact-checkers as false, the platform now appends related fact checks.

It was a prime example of how misinformation research has real-life policy implications, Bode said. Going forward, she’d like to see more work done on which types of people are most susceptible to misinformation, as well as which kinds of messages are most effective in changing their views.

“Research can help us understand the mechanisms behind patterns we see, and understanding those mechanisms is key for being able to alter behaviors or outcomes,” she said. “More information is always a good thing!”

Matthias Nießner, Technical University of Munich

Matthias Nießner was surprised by the reaction to his paper.

“It was widely seen as a threatening way for fake news to spread,” he told Poynter. “It was very surprising for us, actually, because the movie industry has been doing this for years — the only difference is that it’s gotten a little bit easier.”

The 2016 project, called “Face2Face,” presents an approach to reenact YouTube videos in real time using machine learning and facial recognition technology. Put simply: It lets people with a webcam alter a YouTube video of someone speaking to make it look like they’re saying something else.

That puts it in the bucket of “deepfake” video technology, or the use of artificial intelligence to substantially modify a video. That phenomenon has been the subject of many a doomsday story over the past few months, but Nießner, a professor in the Visual Computing Lab at the Technical University of Munich, said not only is the technology not new — it’s still rudimentary.

“It’s going to stay like that for a while,” said Nießner, who got his start researching graphics. “There’s a lot of interest from a research perspective (in) how far you can push manipulation, but practically speaking, it’s going to take a while before you have really bulletproof fakes.”

“For someone who doesn’t know how this technology works, it’s very hard.”

Still, detecting deepfake videos online remains a challenge for fact-checkers. With that in mind, Nießner's team is working on methods like FaceForensics, a system that pulls from a dataset of about half a million edited images from more than 1,000 videos to detect patterns in manipulated videos.

Aside from developing ways to weed out deepfakes online, Nießner said he hopes his team’s work will start an open dialogue with tech companies and news consumers about media literacy.

“One reason why we did all this stuff is we really wanted to raise awareness,” he said. “Ultimately we have to educate people in a way to make them understand what is possible. The open research community has to do that.”

Brendan Nyhan, Dartmouth College

If you’ve read a study about fake news, you’ve probably read Brendan Nyhan.

The Dartmouth College professor and occasional New York Times contributor is prolific, having authored several widely cited studies on misinformation. Although its findings have since been disputed, including by Nyhan himself, his research on the so-called “backfire effect” — which posited that people are more likely to believe misinformation that confirms their views when presented with a corresponding correction — has frequently been the basis for high-profile stories about fact-checking.

But his work didn’t always get that much attention.

“When Jason (Reifler) and I started doing research in this field, misinformation wasn’t really a topic,” he told Poynter. “When you’re trying to do new research it can be challenging to publish because a set of standards haven’t emerged on how to approach research questions or how to define key terms. In the absence of that shared framework, it’s hard to progress.”

After graduating from college in 2000, Nyhan started the blog Spinsanity — a response to what he saw as a lack of factual debate during the 2000 U.S. presidential election. This fact-checking precursor landed syndication deals with publishers like Salon and The Philadelphia Inquirer before shuttering in 2005 when Nyhan started graduate school.

He wrote his dissertation about political scandal, a subject that marred Bill Clinton’s presidency while Nyhan was growing up. But after graduating, his work bent more and more toward misinformation.

“I think I’m the most proud of the way that I’ve helped bring misinformation research forward in social science and reach a larger audience,” he said. “Scholars for too long have neglected factual beliefs and studying public opinion and political psychology … it’s important for us to play catch up, and I hope we have.”

So what questions still keep Nyhan up at night?

“We have the most to learn about the role of elites in creating and promoting misperceptions and misinformation,” he said. “I don’t think we understand the strategy of misinformation very well or how beliefs can contribute to factual beliefs becoming polarized.”

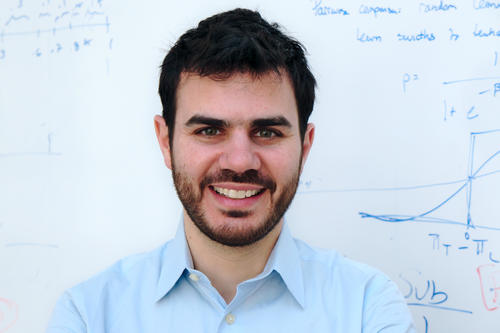

David Rand, Yale University

David Rand wasn’t into misinformation before it was cool.

“Like a lot of people, it was the 2016 election that brought these issues into focus for me as an exciting and important area of study,” he told Poynter in an email.

But since then, the Yale University associate psychology professor has been a powerhouse, conducting research that’s relevant to tech platforms’ ongoing battle against misinformation. His work has been critical of Facebook, including studies that call into question its fact-checking efforts and calls for more open sharing of data.

To Rand, the importance of research lies in its ability to affect wide-reaching policies.

“Firstly, basic science that sheds light on what factors influence people’s belief in, and desire to share, different stories is really important in terms of guiding the development of effective interventions,” he said. “And secondly, academic researchers can do first-round evaluations of potential interventions to help hone in on what seems most promising.”

In one major study from September, Rand and his research partner Gordon Pennycook, a postdoctoral fellow at Yale, found that tagging fake news stories on social platforms like Facebook decreases their believability while lending more credibility to untagged false stories. The work shed light on a program that has become the tech company’s most visible effort to tackle fake news, and Facebook later abandoned the practice in favor of simply appending related fact checks.

“I’ve felt particularly good about our ability to run studies evaluating interventions that are currently in use … and to then quickly get our results out into the public sphere as working papers to help inform public debate and policy-making,” he said.

Still, Rand said that what researchers don’t yet know about misinformation is, in many ways, the most basic: What impact does exposure to misinformation have on people’s attitudes toward politics and trust in media?

“And how does this vary across different kinds of misinformation?” he said. “Second, what are effective interventions to reduce belief in misinformation, and — probably more importantly — reduce sharing of misinformation?”

Briony Swire-Thompson, Northeastern University

Briony Swire-Thompson’s research can be divided into two buckets.

“Why people forget corrections, but also people’s ideological beliefs and why that perhaps holds people back,” she told Poynter.

Ironically, she still doesn’t know where those two concepts meet. And that’s what she’s interested in learning more about.

Now a postdoctoral researcher at Northeastern University's Network Science Institute, Swire-Thompson has done work for the Massachusetts Institute of Technology on how fact-checking changes people’s minds about certain issues — but not their votes. She’s also researched how one’s familiarity with misinformation affects their receptivity to corrections.

In that way, she’s lucky.

“I think a lot of people in cognition and memory studies are confined to theoretical grounds, but misinformation, I think, is just so applicable,” she said.

Swire-Thompson first became interested in misinformation in 2009, when her honours degree supervisors at the University of Western Australia (UWA), Ullrich K. H. Ecker and Stephan Lewandowsky, started researching the phenomenon. She loved it immediately but took a two-year break in Ecuador before starting her doctorate because she knew she’d have to start working afterward.

“It certainly helped make me certain that misinformation research was really important,” she said. “I’d see and witness how hard it is to correct things like mental health issues being contagious. I worked in a hospital there, and I think no matter where you go — if you already piqued your interest in misinformation — you kind of see it everywhere.”

So when she came back to academia, she picked up right where she left off.

While writing her Ph.D. dissertation at UWA, she said people were confused as to why she was researching belief change over time for a psychological science degree. But by the time she submitted it, they understood why the topic — and what researchers don’t know about it — was important.

“Through time, the application (of misinformation research) has become more and more glaringly obvious,” she said. “This is such a new area of research and we still don’t have a good handle on underlying mechanisms.”