Carl Woog sat on stage in front of about 200 fact-checkers. And for about half an hour, he took questions and tried to assuage their concerns about the viral misinformation that has become endemic on WhatsApp.

“We want to learn, right?” the company’s policy communications lead told Poynter before the panel, which I moderated. “We want to figure out how we can be more helpful, but I think fact-checking is going to be essential.”

In many ways, Woog’s attendance at the International Fact-Checking Network’s Global Fact-Checking Summit in mid-June was a notable move from WhatsApp, the Facebook-owned private messaging platform that has been under growing media scrutiny for its misinformation problem in recent weeks.

In the spring, WhatsApp became a top source of political misinformation ahead of local elections in India, where the platform has more than 200 million users (its largest market). The fakery went from bad to worse with rumors about child abductions and alleged organ harvesters, which have reportedly contributed to the deaths of about a dozen people over the past several weeks.

“In India, for a lot of people (WhatsApp) is their first entry point into the internet itself,” Govindraj Ethiraj, founder of Indian fact-checking project Boom Live, told Poynter. “I think there’s a certain degree of belief among common people about information and messages that come on these platforms … people fall for these things all the time.”

“It’s like email — people are sharing information at very high speeds through chains.”

While the extent to which hoaxes themselves are directly causing violence in India is still unclear, the situation is just one example of how WhatsApp has been weaponized to spread misinformation in recent months.

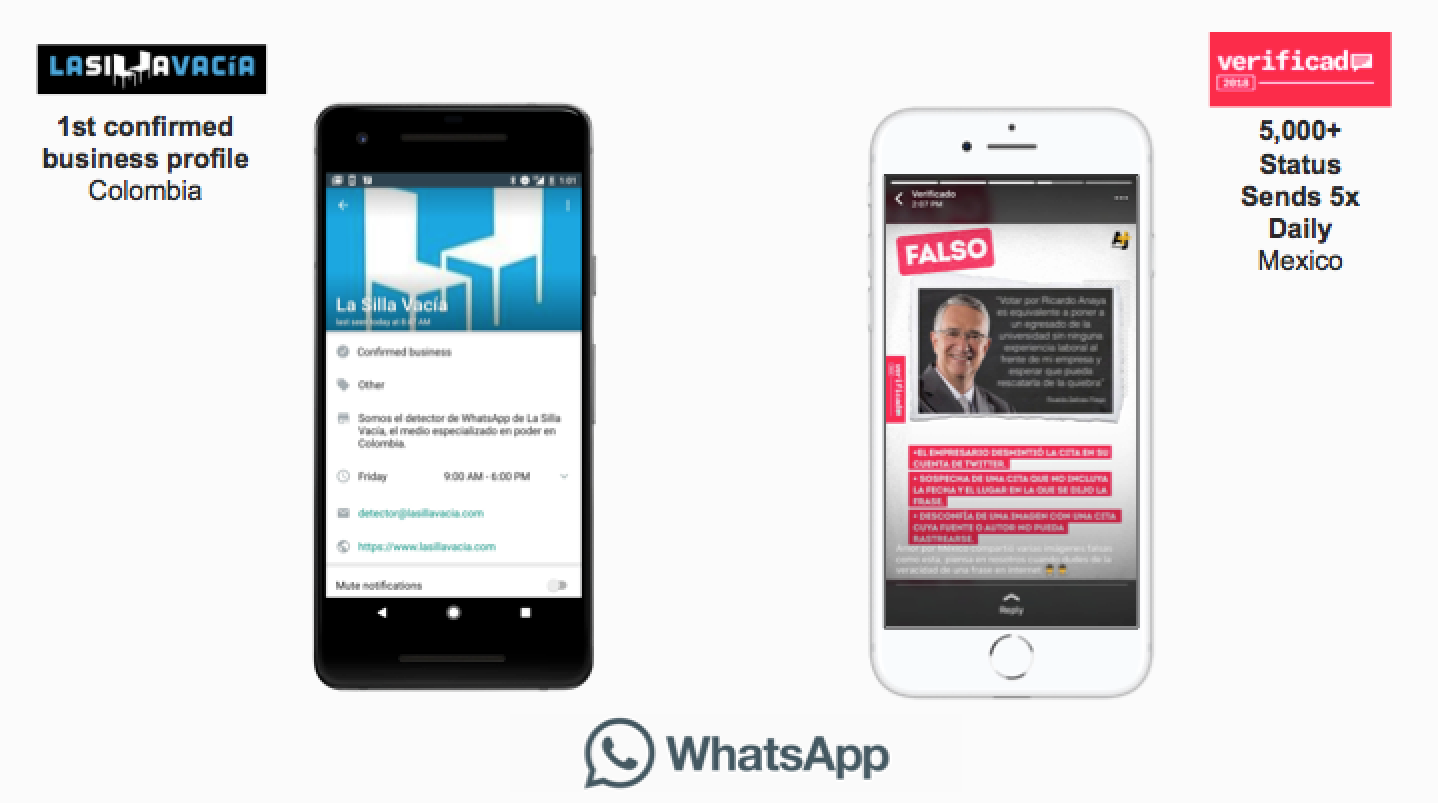

Amid an outbreak of yellow fever in Brazil, where 120 million of the country’s 200 million citizens use WhatsApp, people are widely sharing anti-vaccine rumors. In Mexico, the platform became a centerpiece of the collaborative fact-checking project Verificado 2018, which created an entire team dedicated solely to debunking hoaxes on WhatsApp.

“During the election campaign, a lot of rumors and fake news and memes and videos circulated through the app,” said Alba Mora, executive producer of AJ+ Español and the leader of Verificado’s WhatsApp team. “So it made a lot of sense to fight the fake news in the same platform so that the user doesn’t need to leave the app to understand if something is real or not.”

Verificado’s ad hoc approach — creating an institutional WhatsApp account to receive and respond to messages about fake news, photos and memes — is a common tactic among fact-checkers, who struggle to address misinformation on the platform. All messages are automatically encrypted and groups are limited to 256 people, which means that no one — not even WhatsApp’s own employees — can see when and where fake news goes viral.

But now, WhatsApp is taking several baby steps to help fact-checkers and researchers fight misinformation on the app.

Most recently, the company announced last Tuesday that it will commission awards for researchers who are interested in investigating misinformation on WhatsApp. Social scientists could earn $50,000 for proposals related to “detection of problematic behavior within encrypted systems,” among other topics.

That update came amid a flurry of other statements in a letter that WhatsApp sent in response to a statement from the Indian government about WhatsApp-related violence (the letter was also sent to Poynter). The letter highlighted several things the platform is doing to try to limit the spread of misinformation, including product controls, proactive action against abuse, digital literacy and fact-checking.

At Global Fact, Woog told Poynter that among the most important things WhatsApp has been working on in recent months is developing working relationships with fact-checking projects like Boom, Verificado and Colombian outlet La Silla Vacía. WhatsApp has provided official support for the latter two, troubleshooting tech problems and teaching them how to make the most of its business app for Android.

Aside from recognizing verified businesses with a checkmark, the additional app, which launched in January, lets those accounts add in-depth descriptions with address and contact information, respond to anyone who messages them and access basic analytics like how many of their messages are sent, delivered and read.

And before the Mexican election earlier this month, Verificado broke the app.

“(WhatsApp) was happy that we broke the app in the sense that there’s a great potential for journalism in general, I think, and fact-checking specifically,” Mora said. “When you post a status only on WhatsApp, the app acts as if you send this media to all your WhatsApp contacts. So our app crashed all the time because it was just too many chats. It was just too much.”

With more than 8,000 contacts as of publication, Verificado had to buy extra memory for the phone it uses to maintain its WhatsApp account. Mora said her team gave the company feedback on how the project was going on a weekly basis, and WhatsApp would troubleshoot with them in return.

Woog said that kind of partnership is what WhatsApp hopes to maintain with more fact-checking projects in the future — particularly in India and Brazil. WhatsApp is an official tech partner of Comprova, a new collaborative verification project in the latter country.

“I think what we hope will be helpful is fact-checkers being aware of how to use our services and, as we grow our business tools, to make those available to fact-checkers to make their missions smoother,” he said.

During his panel, Woog shared some data — the average group size on WhatsApp is six people and about 90 percent of the messages sent on the platform are one-to-one. WhatsApp can see which accounts are sharing a lot of messages, but since the app is encrypted, that kind of broad information is essentially all the company can really call up about how its users interact, Woog said — and that complicates the potential for a more official, Facebook-style partnership in which fact-checkers could directly debunk false stories.

“I’m not sure that we necessarily have data that could be more helpful than the generic understanding of how the service is being used,” Woog said. “We don’t track who messages who, which people are messaging which people — and we do that for privacy.”

Woog also cited privacy as one of the reasons WhatsApp hasn’t gone forward with testing for a much-asked-for feature among fact-checkers: labeling who originally composed a message. But the product changes that WhatsApp is considering include labeling messages when they’ve been forwarded, a feature it’s been beta testing in India. According to the letter, WhatsApp plans to launch that soon.

“The idea behind that is we give people insight when they receive a message that they knew their friend wasn’t the originator of — and that should give them pause before sending it on again,” Woog said. “It could be (a message) that is a rumor or hoax, and we want to help give people context and clues to thinking that it’s not wise to forward that on again.”

WhatsApp has also rolled out changes to groups in recent weeks, allowing administrators to control who gets to send messages within them and preventing people from adding others back to groups that they’d already left. But some of fact-checkers’ wants aren’t on the company’s to-do list yet.

At Global Fact, Karen Rebelo, deputy editor of Boom, asked Woog if WhatsApp was considering adding a reverse image search capability to the app. That way, users could check whether or not an image was fake or altered. Woog said that WhatsApp might consider it.

Although Mora said WhatsApp was supportive of Verificado throughout the election, she wishes the company had created a feature that would have allowed her team to have more than two sessions open at once. Currently, fact-checkers can only have two people manning WhatsApp at a time: one on a phone and one on a computer.

Then there are limitations in WhatsApp Business’ labeling feature, which allows users to organize contacts and chats.

“You can label conversations by colors, but we would need like 50 different labels and they have like five,” Mora said. “The scale that we were working on — it just was not friendly for us.”

Still, fact-checkers are generally positive about the steps that WhatsApp has taken to address misinformation on the platform over the past year.

“There seems to be an overall recognition of how this platform has evolved in India, and that they need to do something about it and start working on it,” Ethiraj said. “If I was discouraged in the past that they were ignorant, I do get a sense now that they are pretty reasonably clued in and working to fix whatever they can fix.”