When Facebook started letting users post text on top of colored backgrounds in 2016, it seemed like a fairly benign way to get people to share more personal thoughts on the platform.

“Adding spice to status updates could help Facebook boost ‘original sharing’ of unique personal content, as opposed to resharing of news articles and viral videos,” TechCrunch reported at the time.

But since then, like other formats on Facebook, the text post feature has been weaponized into an effective way to spread misinformation on the platform.

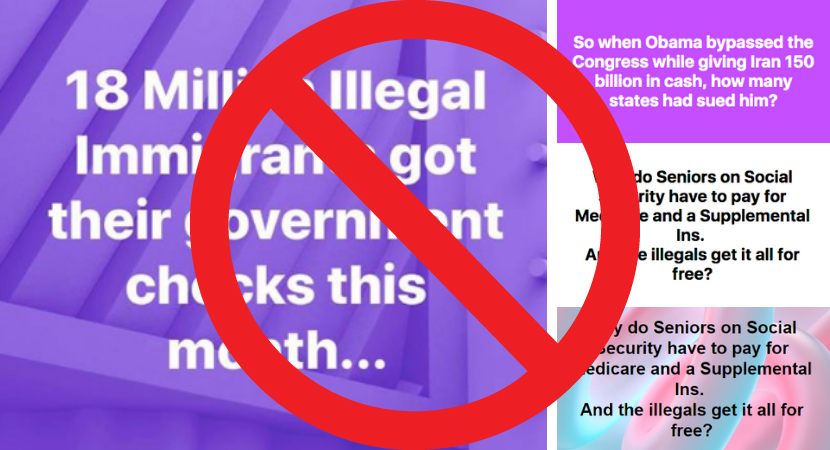

Over the past few weeks, some of the most viral hoaxes on Facebook have spread in the form of text posts. They make salacious political claims without linking to any website or attaching a photo or video. They often come from regular Facebook users instead of Pages or Groups.

And, according to data from BuzzSumo, an audience metrics tool, those kinds of hoaxes are getting more reach on Facebook than articles from fact-checkers that partner with Facebook to limit the reach of misinformation. (Disclosure: Being a signatory of the International Fact-Checking Network’s code of principles is a necessary condition for joining the project.)

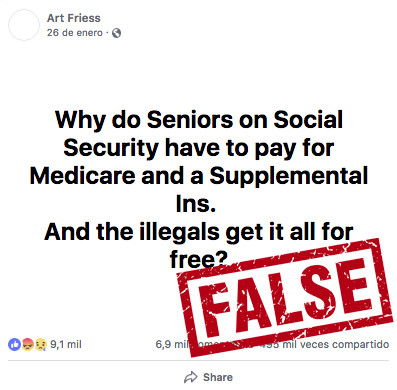

Last week, a hoax got more than 510,000 likes, shares and comments on Facebook by claiming that American senior citizens have to pay for Medicare while undocumented immigrants don’t. The post was just black text on a white background, but it still got hundreds of thousands more engagements than a debunk from Factcheck.org.

Near the end of January, another text post got massive engagement on Facebook. That one also had to do with undocumented immigrants and got 13,000 more engagements than a fact check from PolitiFact. Earlier that month, another text post got 180,000 more engagements than another fact check from the Poynter-owned outlet.

And this week, that trend didn’t slow down.

One text post, published at the end of January, repeated the Medicare hoax debunked by Factcheck.org on Feb. 21. It got more than 15,000 engagements, 10 times the reach of PolitiFact’s debunk. Another false text post published Feb. 19 about the Iran nuclear deal racked up five times more engagements than a corresponding Factcheck.org article.

It takes virtually no time for users to create text posts, yet they get massive engagement on Facebook. Why?

First, research shows that visual misinformation spreads further on social media than text-based posts. Photos regularly beat out fact checks on social media. So by adding a visual element, in this case, a colored background, users can attract more eyeballs (and shares) than a mere bogus claim in a text status.

Second, Facebook employs artificial intelligence to try to detect duplicate fakes on the platform. Once it finds a new post repeating a hoax that’s already been debunked elsewhere by one of its fact-checking partners, it automatically downranks it. But that system could be hampered by the fact that text posts contain no links or visually similar elements that can be used to identify when a false claim gets repeated by multiple users.

Finally, the reason false text posts get so much reach could have to do with the reason they were created in the first place: they facilitate more personal sharing. As WhatsApp’s misinformation problem illustrates, people are more likely to believe bogus claims if they’re shared by people they know and trust. Claims in text posts inherently come from users themselves, not other websites, so their friends might be more likely to share them.

Below is a chart with other top fact checks since last Tuesday in order of how many likes, comments and shares they got on Facebook, according to data from audience metrics tools BuzzSumo and CrowdTangle. None of them address spoken statements (like this one) because they aren’t tied to a specific URL, image or video that fact-checkers can flag.