News organizations were dubious when Facebook announced last week that it would highlight "trusted sources" of news based on community feedback. It was, after all, the Facebook community that propelled “fake news” stories and Russian-linked bots during the last election cycle.

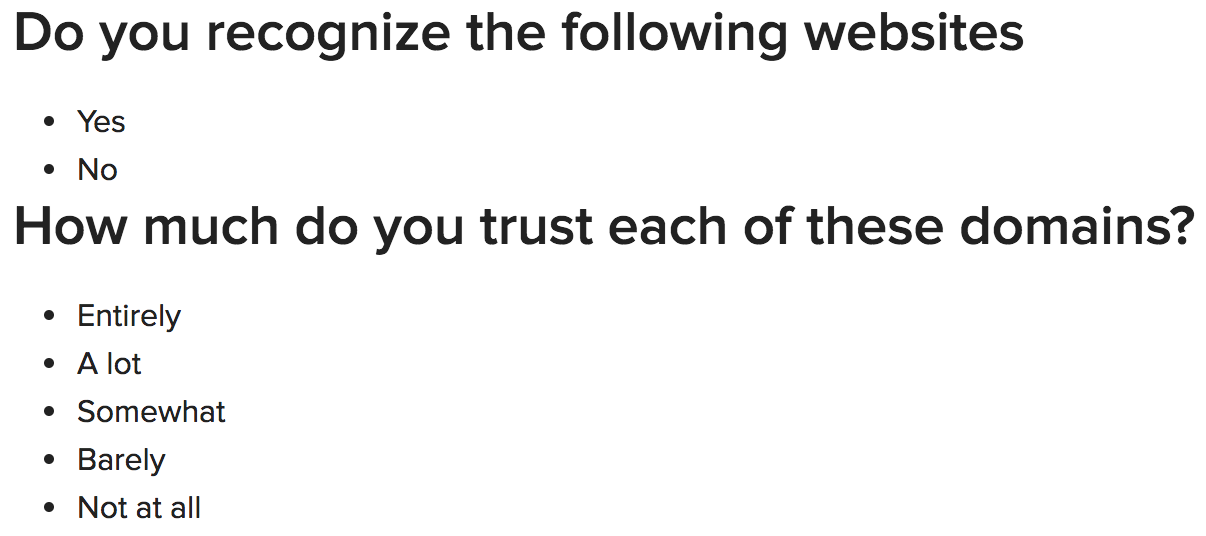

But journalists went apoplectic when the social media giant later confirmed the survey consisted of just two questions.

Here’s the survey (via BuzzFeed):

In a response to another user on Twitter, Facebook’s vice president of product management, Adam Mosseri, defended the survey.

“I understand that some people may balk at how simple a survey is, but complicated surveys can be confusing and bias signal, and meaningful patterns can emerge from broad surveys,” he said.

Though the survey has problems, Mosseri has a point. The format falls in line with how other researchers might attempt to judge trustworthiness. Steven S. Smith, a professor of political science and director of the Weidenbaum Center at Washington University in St. Louis, called it “not so bad.”

“If they have a larger pool and that pool is fairly comprehensive, and if a website is randomly selected for each respondent, they’re still going to get a very large sample of their users and that’s not a bad strategy. Many of us in the survey business do that all the time,” Smith said.

Long sets of questions earn fewer responses. Running shorter surveys across broader swaths of diverse people is a valid way to earn more responses. Assuming that users see a random selection of news organizations, it’s a fine way to do things, Smith said.

But even if the methods are sound, the survey still may not be. Its aim to “shift the balance of news you see towards sources that are determined to be trusted by the community” is, in Facebook parlance, complicated, because the news industry is so polarized.

“There are very few sites that have very large followings because there are so many sites. Everyone has a plurality, nobody has a majority. So who has the biggest plurality?” Smith said.

Consider Fox News. It’s the most-watched cable channel. But Facebook users are likely to rank its trustworthiness in vastly different ways. Republican and conservative responses will skew far toward the high end of the scale, and Democratic and liberal responses will skew far toward the low end.

“How does that inform Facebook about placing Fox News items on their site? I don't know,” Smith said.

“The average there, the mean, probably is not very useful. [Fox News] might end up being very much in the middle of the road in terms of trustworthiness, but that’s very different from another organization where the vast majority of responses are in the middle.”
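Smith's point about the mean can be illustrated with a small sketch. The ratings below are invented for illustration on an assumed 1-to-5 trust scale; they are not Facebook data, and the scale itself is an assumption rather than anything Facebook has described. The names `polarized`, `consensus` and `summarize` are hypothetical.

```python
from statistics import mean, pstdev

# Hypothetical ratings on an assumed 1-5 trust scale (invented, not real data).
polarized = [1] * 50 + [5] * 50             # audience split between the extremes
consensus = [3] * 80 + [2] * 10 + [4] * 10  # most responses sit in the middle


def summarize(ratings):
    """Return (mean, population standard deviation, share of ratings equal to 3)."""
    middle_share = sum(1 for r in ratings if r == 3) / len(ratings)
    return mean(ratings), pstdev(ratings), middle_share


polarized_summary = summarize(polarized)
consensus_summary = summarize(consensus)

# Both sources share the same mean, but the spread and the share of
# middle-of-the-road answers are very different.
print("polarized:", polarized_summary)
print("consensus:", consensus_summary)
```

Under these assumptions both sources land at the same average, yet no respondent in the polarized group actually gave the middle rating, while most of the consensus group did. A measure of spread, or the full distribution, would separate the two cases where the mean alone cannot.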

In other words, does polling polarized audiences tell you anything about the real trustworthiness of news organizations?

“It may not,” Smith said.

But, echoing the ongoing conversation about Facebook as a publisher vs. Facebook as a platform, Smith said the biggest problem with the survey might be Facebook’s hands-off approach.

“Allowing the audience to determine credibility without exercising any judgment themselves is a questionable standard,” he said. As an example, he said a fact-checking organization like PolitiFact is “actually making a judgment about accuracy and trustworthiness of a source or a claim. What Facebook is trying to do is let someone else do that for them. That’s a problematic enterprise because of the biases that that audience has.” (Disclosure: PolitiFact is a project of the Poynter-owned Tampa Bay Times.)

Essentially, audiences are going to be familiar with news sources that they already use. If they’re not familiar with one, they won’t rate it. If they’re familiar with one and it doesn’t match their beliefs, they’ll likely rate it as untrustworthy. “And so the selection of the source and their attitude toward it are all a product of their bias,” Smith said.

So, while the two-question survey format may be valid, the overall results likely won’t be.

“I think that their responses are going to represent audience bias more than any reasonable standard of trustworthiness,” Smith said.