Facts-don’t-matter headline writers take note: According to new research, fact-checking may be an effective medicine against misinformation. However, it doesn’t seem likely to move people at the ballot box.

A study published today in Royal Society Open Science focused on statements, both factual and inaccurate, made by Donald Trump during the Republican primary campaign.

The study’s most helpful contribution to the debate over the role of facts in political discourse is that it draws a distinction between the effect of corrective information on people’s beliefs and its effect on their voting choices.

The basic conclusion of the study is that fact-checking changes people’s minds but not their votes.

The authors presented 2,023 participants on Amazon’s Mechanical Turk with four inaccurate statements and four factual statements. Misinformation items included Trump’s claims that unemployment was as high as 42 percent and that vaccines cause autism.

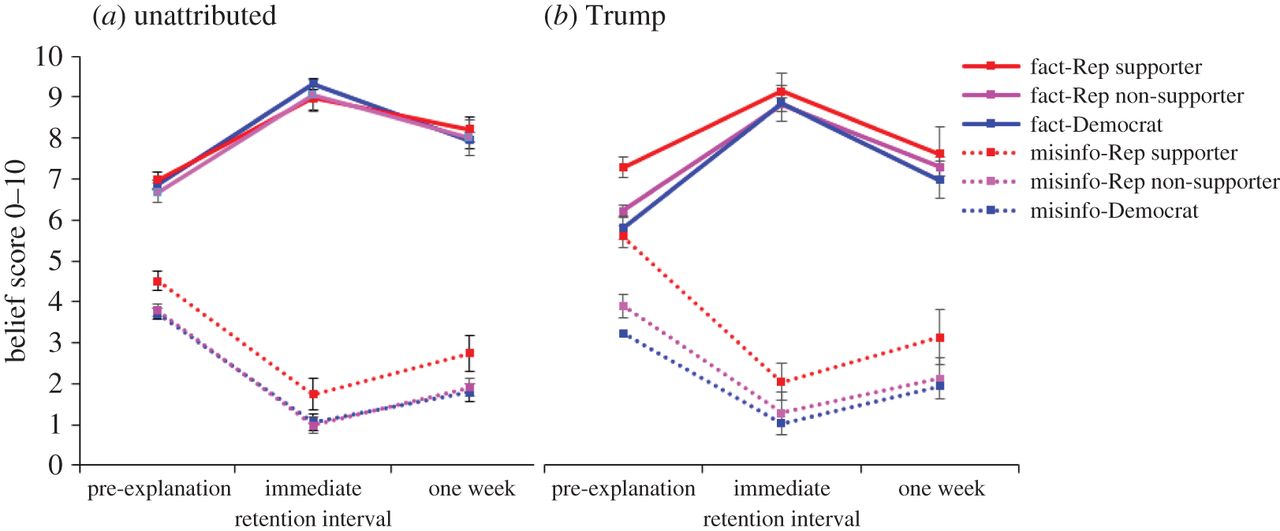

These claims were presented in one of two ways: unattributed, or clearly attributed to Trump. Participants were asked to rate how strongly they believed each one on a scale from zero to 10.


Each claim was then corrected (or, if factual, confirmed) with reference to a nonpartisan source such as the Bureau of Labor Statistics. Participants rated their belief in the claim again, either immediately or a week later.

The results are clear: Regardless of partisan preference, belief in Trump falsehoods fell after they were corrected (see dotted lines below).

The belief score fell significantly for Trump-supporting Republicans, Republicans favoring other candidates and Democrats. A fact-checked Trump falsehood lost a large portion of its adherents.

Belief in Trump and unattributed misinformation and facts over time, across Trump support groups and source conditions. Rep, Republican; misinfo, misinformation. Dotted lines show misinformation items. Error bars denote 95% confidence intervals.

“It was very encouraging to see the degree of movement, at least in the immediate sense,” said Briony Swire-Thompson, the paper’s lead author and a PhD candidate at the MIT Department of Political Science.

There’s more good news for fact-lovers in the research. Generally speaking, misinformation items were believed less than factual claims from the start. Moreover, the research found no evidence of a “backfire effect,” i.e. an increase in inaccurate beliefs after correction. This is consistent with other recent research.

The rub, of course, is that Trump supporters were just as likely to vote for their candidate after seeing his inaccuracies corrected. The paper found that voting preferences didn’t change among Republicans who didn’t support Trump, either. Only Democrats said they were even less likely to vote for Trump.

Another interesting finding is worth quoting in full.

If the original information came from Donald Trump, after an explanation participants were less able to accurately label what was fact or fiction in comparison to the unattributed condition, regardless of their support for Trump.

This Trump-induced uncertainty among participants about whether claims were factual, whether or not they were actually correct, resonates with one of the few truly thoughtful pieces about the so-called “post-truth” phenomenon. Written by Jeet Heer of The New Republic, it argued that Trump’s communication style was an attack on logic as much as on facts. Trump’s end goal, according to Heer, is to create a state of confusion in which his words at that moment are the only “facts” that matter.

Swire-Thompson and her colleagues also conducted a second experiment that examined whether the type of source correcting a falsehood influenced how much beliefs changed. The aim was to evaluate whether, for instance, a Republican correction of Trump misinformation would have a greater effect than a Democratic one.

Only a limited effect was detected: “Partisanship and Trump support were far better predictors of the extent of belief updating than the explanation source.”

The study joins a growing body of recent research on fact-checking. It indicates that fact-checking can change people’s beliefs, with the caveat that partisanship weakens the strength of this change. It is novel in shedding some light on how fact-checking affects voting intentions.

There’s plenty more that can be studied in this field, Swire-Thompson said. A similar study could be conducted on a polarizing liberal figure, for instance.

The determinants of voting intentions can also be probed further, Swire-Thompson said. Voting intentions might change if the relative share of misinformation and factual claims presented to participants were modified (this study exposed participants to an equal number of facts and falsehoods).

The search for the factors that really do drive people to support or abandon a candidate is not likely to be simple, Swire-Thompson said.

“I think it will be an uphill battle to determine what does change people’s voting intentions,” she said.