Tagging fake news on social media is great, but does that actually change beliefs based on misinformation? According to a new study, the jury’s still out.

A meta-analysis of lab debunking studies, published in Psychological Science, found that it’s often not enough for fact-checkers to simply correct online misinformation. They also have to create detailed counter-messages and alternative narratives if they want to change their audiences’ minds. And even when fact-checkers do construct detailed counterpoints and alternative narratives, it can be impossible to overcome how people already think about certain topics.

“As we know very well, misinformation will persuade,” Dolores Albarracín, professor of psychology at the University of Illinois at Urbana-Champaign and the study’s co-author, told Poynter. “Of course it’s possible to correct misinformation, but that’s not as strong as the misinformation itself.”

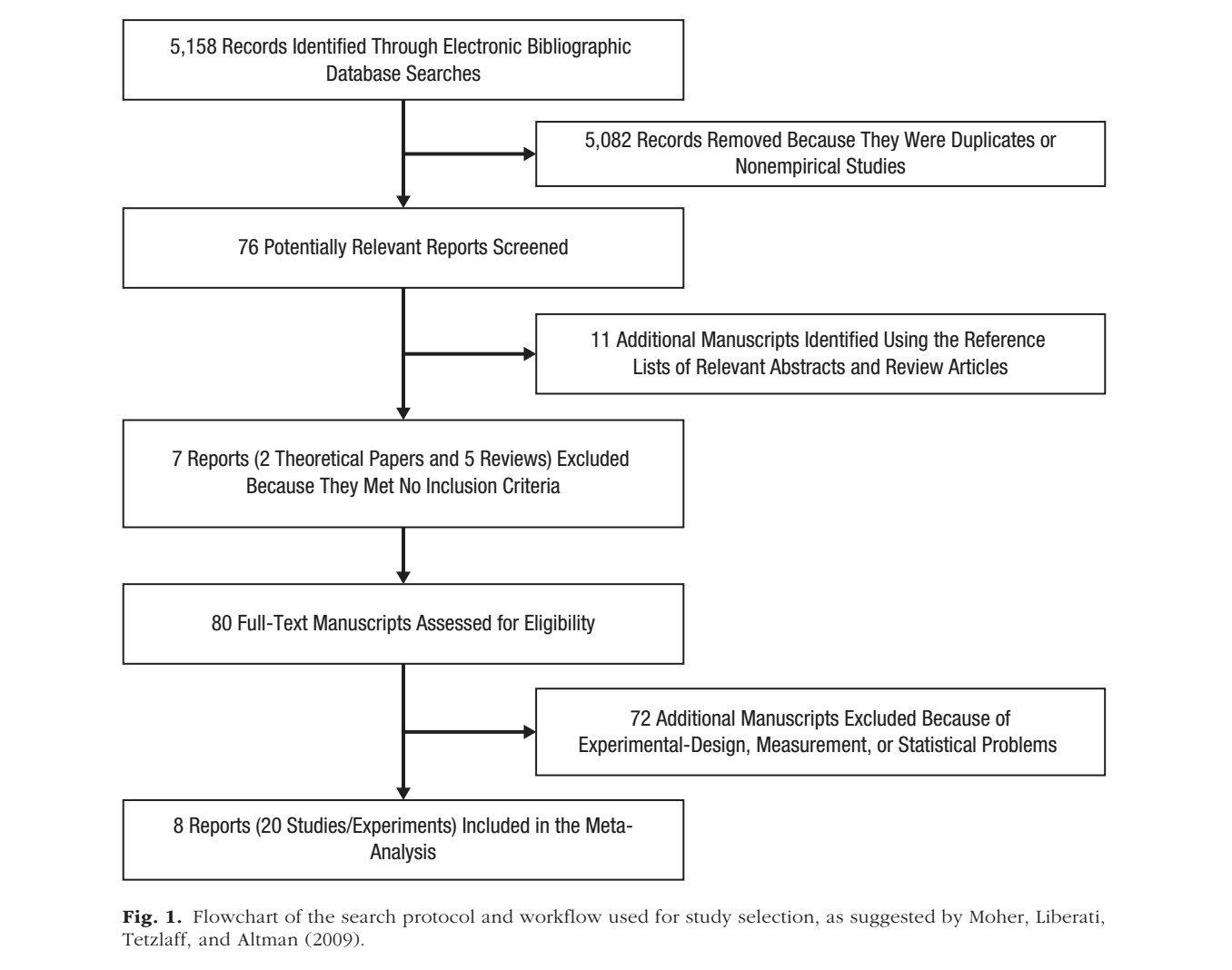

That headline finding of the study, conducted by researchers at the University of Pennsylvania and UIUC, isn’t exactly positive for fact-checkers — but it is comprehensive. The analysis drew upon eight of the 76 reports initially uncovered by a set of paired search query keywords, such as “misinformation OR misbelief,” weeding out irrelevant or duplicated scholarship using three criteria (see chart below). Researchers examined a final selection of 20 experiments from 1994 to 2015 that address fake social and political news accounts in order to determine the most effective ways to combat beliefs based on misinformation.

The study has several implications for fact-checkers, most of which can be boiled down into one idea: be thorough and thoughtful in your corrections.

“The correction is generally not perfect,” Albarracín said, “although it can achieve very high levels and be close to perfect in a lot of cases.”

So if simply labeling things as false is an imperfect practice, which approaches should fact-checkers focus their work on? Albarracín said it comes down to audience psychology.

“The general idea is that you need to design the general mental model people form,” she said. “When you hear something that’s persuasive, you form an idea about what’s going on. That’s imprinted in memory. So later on, when you come in to correct an important detail, you need to keep in mind that that model has not gone away — it’s going to be dominant.”

A key way for fact-checking and media organizations to change that model is to supply detailed rebuttals of each point that constitutes a news consumer’s opinion about a piece of misinformation. Albarracín said the most effective way to do that is to bypass the traditional “letter to the editor” approach and engage in meaningful conversations on social media. And that means asking your readers questions about what they think.

“Social media is ideal because it's equal ground and everyone is a participant,” she said. “If you’re asking questions instead of simply stating information, facts, then the audience can begin to build a narrative about what is going on.”

Based on data from the study, that effort may also be audience-specific. Albarracín said people who are generally more thoughtful about their news consumption may require more in-depth fact checks, and vice versa. Don’t overdo it, though — Albarracín said one of the most counterproductive things many fact-checking outlets do is rehash each point of a piece of misinformation.

Her advice: get on to the correction quickly so that readers’ beliefs are straightforwardly corrected instead of subconsciously reinforced.

“The more elements you miss in the initial story that you cannot counterargue, the more difficult it would be for new information to kick in,” she said. “The more you use your correction to rehash the initial story, the closer you are to reinstating that story without guaranteeing people will get to the second half of that writeup.”

Despite the study’s wealth of evidence-based findings, and some helpful extrapolations of the data, Albarracín said there are some notable limitations. First, it offers fact-checking and media organizations little concrete advice on improving corrections, because there is little scholarship about making audiences more active online. Second, it remains unclear how to help audiences build new narratives about topics covered by fake news without directly pandering to their beliefs, and how to get them to trust media they view as inherently untrustworthy. That, Albarracín said, could be the foundation for a future study and a roadmap for where fact-checkers should go next.

“We don’t have good answers to what exactly can be done from the media point of view to make the audience active,” she said. “We know that’s important, and we know how it’s been done in the lab, but we need experiments looking at how to actually do it.”