Around the world, publishers are wondering whether they will be able to get paid for licensing their news content to companies like OpenAI for use in artificial intelligence systems known as large language models and, if so, how they should think about the value of their news.

They’re also wondering how to avoid the problems they faced with social media outlets that profited from advertising related to news, built audiences on the back of news and then paid out peanuts to publishers. As with the payouts from Google, we can expect AI companies to offer the large outlets low millions, the medium outlets hundreds of thousands and the small outlets, or those in low- and middle-income countries, about $20,000 a year.

“We consider that artificial intelligence, which does not compensate the publishers, is the biggest threat ever,” said Marcelo Rech, executive president of Brazil’s National Newspapers Association, in an interview.

The good news is that large companies like OpenAI need quality content, creating an opportunity for publishers to negotiate licensing deals. OpenAI is in a hurry and is nailing down (nonexclusive) agreements with large, reputable news outlets in the United States and Europe while postponing talks with small outlets and outlets in the rest of the world. Reached by email, OpenAI declined to comment.

“The agreements being made now are setting the table for the mechanisms and metrics for later deals to be made with local outlets,” said David Clinch, media revenue consultant and co-founder of Media Growth Partners, who is familiar with such discussions.

Some are warning that deals between big outlets and big AI companies will only serve to create oligopolies in the field, squeezing out the open-source language models and smaller competitors. Unsurprisingly, the large U.S. companies are calling for regulation and guardrails, but European regulators and smaller firms are worried that this will simply freeze out competition.

“We are witnessing a movement towards regulatory capture,” said Henri Verdier, France’s ambassador for cyber and digital affairs. “The movement has two aims: to give a competitive edge to the existing players and to tell us what problems we should be concerned about (such as the famous ‘existential risk’) while forgetting other, very topical, problems like the privatization of the public domain, theft of intellectual property, threats to privacy.”

We spoke to experts in the world of journalism and tech to understand what is different this time and dig into these questions of valuing news.

Does a model need more than one source? Or is it enough to just have the BBC or Associated Press?

Even if OpenAI (or another large company) strikes deals with the big outlets in each market, it will still need to make arrangements with other outlets. That’s because generative AI predicts text effectively only when it also operates as a super-powered search engine in real time. Prediction is only effective with a large enough input sample. Relying on old information is not enough.

“Theoretically, an LLM needs only one source. But in a perfect world, you need more than one source,” said Clinch. “You want enough of a sample to recognize that there may be something new or relevant. Some (prompt) answers may come from a source in California or India. If your answer is only based on one story then you probably reduce your ability to provide truly contextualized answers.”

Even if an AI company decides to make deals with multiple sources, there’s bad news. OpenAI and other firms are in startup mode and booking losses. They will likely use this as an excuse to pay less money to publishers. Plus there is a lack of transparency: Publishers don’t know which of their content large language model developers have already hoovered up; it is a complete mystery what content was used to train models.

“It’s like they entered the house, took all the furniture and all the jewels and all the goods and then they say, ‘Oh, we didn’t know there was a door! Now we won’t enter anymore,’” Rech said.

Many of the deals being done (as with Google and Facebook previously) involve some cash changing hands but also credits toward using tools. Publishers are afraid they will become dependent on the technology and then financially exploited later. They look to the lessons of years of dealing with Facebook and worry about whether large language models will use publisher content to build news aggregation services and then trap them as the price for their content drops.

To deal with the lack of transparency and “ensure a fair value sharing between tech companies and news publishers,” the French Conseil National du Numérique is proposing a third-party platform where data about large language models can be stored to “understand and evaluate the relative importance of information, its quality and variety, develop tools, establish metrics.”

Publishers know how to rank content

Publishers argue that scraping content — when information is pulled into large language models from publisher websites — is not a good way to get quality information into such models.

In guidance to its members issued in September 2023, Brazil’s National Newspapers Association wrote, “Most likely, all journalistic content available digitally around the world has already been ‘digested’ for training (generative artificial intelligence) models. As we know, such models can point to additional sources of query but do not identify the origin of the information in their responses.” But publishers say that staying up-to-date is essential for such models and that is why AI companies will need news on an ongoing basis. That means deals cannot be “one and done” but must be ongoing licensing agreements. The technical term for pulling in fresh material at the moment a question is asked is “retrieval-augmented generation.”

“The value is not so much the training data but who is (the model) pinging for contextual information? As an industry, we’ve not made as much of this as we should have,” said one media/tech strategy expert involved in the talks, who asked not to be named because negotiations are ongoing.

Publishers also argue that they know how to categorize and flag valuable news/information — they’ve been doing it for years as part of how they optimize for advertising and subscription revenue; for example, putting premium content behind a paywall.

“Publishers have a great and valuable protocol for valuing content,” Clinch said.

Syndication and licensing

Others say they want their content to be treated as syndicated content, meaning not buried in a huge language model but “with guardrails around how it is presented.” Attribution, prominence and context are all important. “How do I know you are not mixing my content with disreputable sources or just taking the bit from some right-wing extremist who I interviewed?” asked another media figure involved in these conversations.

What is valuable?

Reliable content: Journalists will be happy to know that accurate and unique content is still valuable. When journalism professionalized (beginning in the 1920s in the United States, according to Columbia Journalism School’s Michael Schudson) part of the reason was that in a world of partisan, unreliable information, credibility was a market niche. So too for large language models. They will only work well if they have reliable information. If a model trains on its own output, then it will quickly degrade because it will feed off its own poor-quality information. “Machine training on machine content will degrade its performance,” said one technology director at a major university.

Unique content: As well as being reliable, the information put into large language models should be unique. “From the model context, the more unique information it gets, the better because then it’s able to return specialized information like being able to answer a question on local news or a question about science,” said one senior tech company employee.

This is potentially disadvantageous for smaller outlets that don’t have a lot of original content. After years of being starved for funds, many smaller outlets rely on wire service stories. Strip that out and there is not much left to sell to generative AI companies.

Contextualized information: Successful AI will be able to search for information and also provide context to that information. To take one example: It’s not just about finding the bus schedule and providing it to a user. It’s also about retrieving context, such as whether there was a snowstorm and the local mayor has warned people in town that the 3 p.m. bus service might be canceled.
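For readers who want to see the mechanics, the bus example can be sketched in a few lines of Python. This is only a toy illustration of the retrieval step, not how any AI company's system actually works: the publisher names, the sample stories, the stop-word list and the functions `retrieve` and `build_prompt` are all invented for the example, and simple keyword overlap stands in for the far more sophisticated search a real product would use.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime

# Hypothetical stop-word list; a real system would use a proper search index.
STOP_WORDS = {"the", "is", "a", "at", "and", "of", "on", "that"}


@dataclass
class Article:
    publisher: str       # hypothetical licensed source
    published: datetime  # used to prefer fresher stories
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text, strip punctuation and drop stop words."""
    words = {w.strip(".,:;!?\"'()").lower() for w in text.split()}
    return words - STOP_WORDS - {""}


def retrieve(query: str, articles: list[Article], k: int = 2) -> list[Article]:
    """Return up to k articles sharing words with the query, newest first on ties."""
    q = tokenize(query)
    scored = [(len(q & tokenize(a.text)), a) for a in articles]
    hits = [(score, a) for score, a in scored if score > 0]
    hits.sort(key=lambda pair: (pair[0], pair[1].published), reverse=True)
    return [a for _, a in hits[:k]]


def build_prompt(query: str, context: list[Article]) -> str:
    """Assemble the text a model would see, keeping each source attributed."""
    lines = [f"[{a.publisher}, {a.published:%Y-%m-%d}] {a.text}" for a in context]
    return "Context:\n" + "\n".join(lines) + f"\n\nQuestion: {query}"


# Invented sample data for illustration only.
ARTICLES = [
    Article("Transit Authority", datetime(2024, 1, 2),
            "Weekday bus schedule: the downtown bus departs at 1 p.m., 3 p.m. and 5 p.m."),
    Article("Local Gazette", datetime(2024, 1, 15),
            "Mayor warns that the 3 p.m. bus may be canceled because of the snowstorm."),
    Article("Sports Daily", datetime(2024, 1, 14),
            "The home team won the championship game on Sunday."),
]
QUERY = "Is the 3 p.m. bus running today?"

hits = retrieve(QUERY, ARTICLES)
prompt = build_prompt(QUERY, hits)
print(prompt)
```

In this sketch the mayor's warning and the timetable both reach the model, each labeled with its source and date, while the unrelated sports story is left out. The point for publishers is that the fresh local story is what turns a bare schedule into a useful answer.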

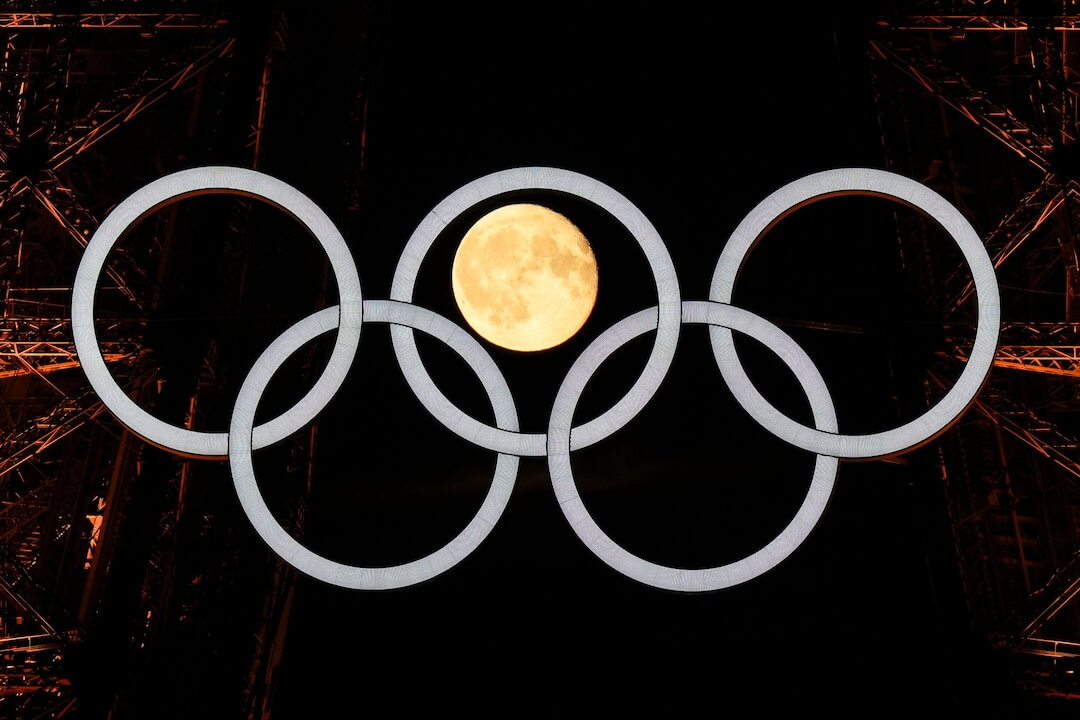

Images and video: Publishers with original images and video will be more valuable if their work can be authenticated and its provenance made known. Alliances like the Content Authenticity Initiative and firms like Truepic believe that government mandates for some sort of watermarking requirement are only a matter of time. Even if governments don’t pass laws, it will just take one big company to go ahead with watermarking and then others will follow.

It’s a bit harder to make these kinds of watermarks for video because video files contain more data than images, but Clinch pointed to the Content ID system used by YouTube for matching rights-owned videos with newly uploaded videos. Optimists hope that once the content is clearly marked, more money will follow from advertising as well as licensing.

Historical archives: These are used for training models, and so access to them can be sold by the publishers who have them, which are mostly the larger and older publishers. Also valuable is current content that comes with journalists who can answer questions about the reporting process and how they got the news.

The ability to get more content as and when it’s needed: The licensing arrangements will likely include the possibility of getting more news when it’s needed. It’s a bit like a stock option. Normally a language model might not need news about what is happening in a small town somewhere or a particular topic. But if some news breaks, then it will suddenly need lots of news about that subject.

“Whenever a fresh journalistic investigation is warranted, the cost of producing new information should be embedded in these contracts. That presents an interesting opportunity for small local outlets to form a coalition so that, when there is demand for a new local story, there will be a greater chance of news coming from a publisher in the coalition,” said Dr. Haaris Mateen, assistant professor at the University of Houston.

Writing quality: Writing quality still matters, but it might not matter as much later. This depends, in part, on what happens with prompt optimization. Just as news outlets began optimizing for search, so too they may start optimizing for AI prompts.

Non-English languages such as Spanish and Portuguese: They are more niche and difficult to substitute. If a story in Spanish or Japanese is about an event in that country, it is much more likely to have been produced for an outlet in that country. Publishers in countries where fewer people speak their language will have a harder time as there is less need for their work. In Iceland, the government decided the best option is to give OpenAI lots of Icelandic content so it can be used in the company’s models to make them more accurate and better quality. The government allocated 4 billion Icelandic kronor and the language technology company Miðeind has been creating spell checks and grammar checks in Icelandic. “In many low-resource language communities, people realize and accept that our languages will not be adequately represented in cutting-edge language models if we are not willing to give up our data for their training. Many are of the opinion that language preservation must trump copyright considerations,” said Linda Heimisdóttir, CEO of Miðeind.

A publisher-led option

Another approach is for news organizations to create their own large language models.

“I don’t think we owe it to big tech to make their models better,” said one U.S. news executive. She explained that large language models are starting to sound like a “happy intern” — when their terms of service forbid discussion of topics such as the trafficking of minors, they can’t even retrieve much of the content produced by quality sites.

“There is a lot of ugly news in the world and the tone of the LLM is increasingly problematic,” she said. “Ultimately, many news organizations will each build their own LLM.”