When asked during a SXSW panel what Americans should worry about ahead of this year’s presidential election, CNN correspondent Donie O’Sullivan replied: “Everything.”

The discussion about truth, deepfakes — and of course, the Kate Middleton photo — revealed he was only half-joking. O’Sullivan, who covers misinformation, joined Washington Post deputy director of photography Sandra Stevenson, CNN director of photography Bernadette Tuazon and CNN editorial director David Allan in front of hundreds in Austin, Texas.

“Here’s a picture. I’m a mom with two kids. I work two jobs. I live in Michigan, a state that can go either way,” Tuazon said. “I hear some rumors about fighting at my precinct, or violence at my precinct. Would I go vote? I don’t think so.”

Those rumors could come in the form of images created with generative artificial intelligence, or audio deepfakes that have already seeped into state primaries. During the 2020 election, misleading images of alleged voter fraud were among the flurry of misinformation that partly drove the Jan. 6 attack on the U.S. Capitol.

“That was sort of the basis of the big election lie,” O’Sullivan explained in front of a collage of AI-generated images, one of which falsely depicted voter fraud.

Although companies like OpenAI and Anthropic have put guardrails in place to prevent the creation of election disinformation, O’Sullivan isn’t convinced that is enough.

“It’s one thing to have policies,” O’Sullivan said. “It’s another thing to actually act on them.”

The Post is “constantly” having conversations about how to identify AI-generated images, Stevenson said, and CNN has an internal training program.

But AI might not be the biggest threat ahead of the election.

When the infamous Access Hollywood tape dropped before the 2016 election, then-candidate Donald Trump was forced to do something he rarely does: apologize. That might not happen today, O’Sullivan said, since one can convincingly — falsely — argue audio or video is a deepfake.

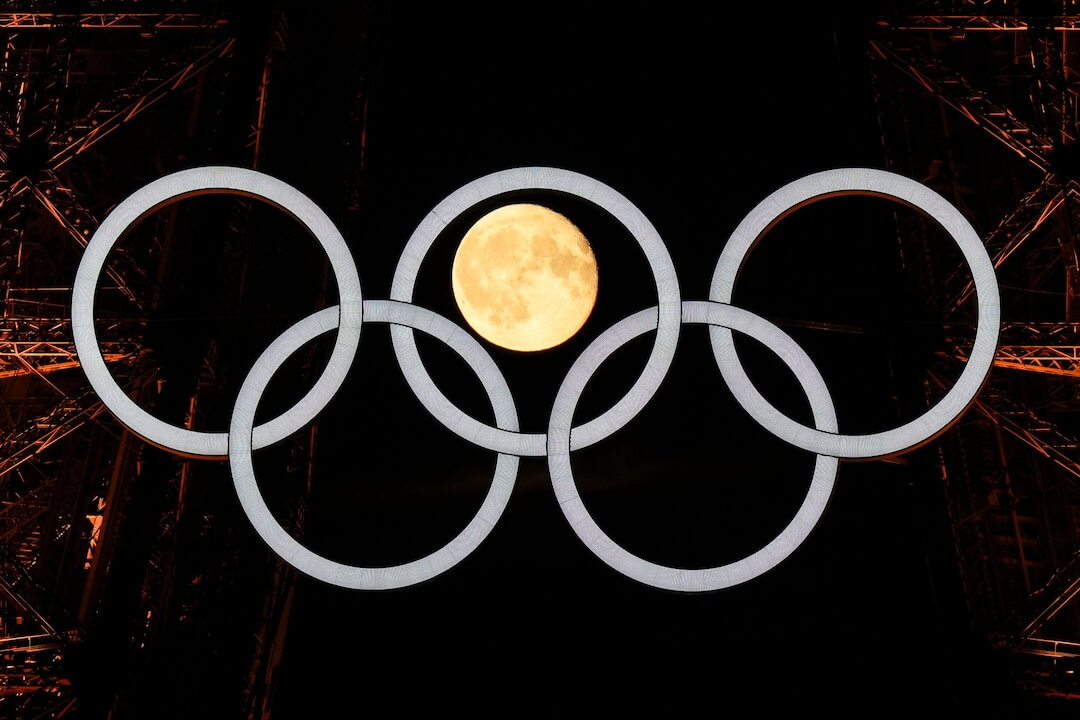

Indeed, the public is now so aware of generative AI’s capabilities that many thought the recent fiasco surrounding a manipulated image of Kate Middleton, the Princess of Wales, shared by the royal family was the work of AI.

The event was encouraging from a media literacy standpoint, since so many users online rushed to debunk the image themselves. But it was also a reminder that user-generated content, even from official sources, needs to be rigorously scrutinized in the age of AI, especially in an election year.

The panel offered a look behind the scenes at the Post and CNN as the photo of Kate and her kids circulated on Sunday. Both outlets ran the image on their homepages, but The Associated Press issued a “kill notification” later that day when people started to notice irregularities in the photo.

When Tuazon got a call from a CNN staffer in London at around 6 p.m., which is close to midnight in the U.K., she thought someone had died. “(My colleague) said, ‘No, it’s much worse.’”

Tuazon had looked at the image on her phone and didn’t notice any glaring issues before it ran. But once the kill notification came in, she started investigating the image, circling odd parts of the photo such as a misplaced zipper and a misaligned sleeve.

While reaching out to photo editors at other outlets, Tuazon was disappointed to hear things like, “Talk to PR,” or, “You’re not going to quote me on this, are you?”

“I think that was irresponsible,” she said. “We work for information, this is not competition.”

CNN decided to leave the original photo up in its first article with an editor’s note, which linked to a piece about the altered image. The Post pulled the photo, but also wrote a follow-up piece explaining how it happened.

Stevenson said it was an opportunity to correct the record and educate the audience about third-party photos.

“I think this tells maybe more a story about journalism and modern journalism than it does the royal family,” O’Sullivan said. “We’ve ceded so much of the content that we reshare and rely on to the newsmakers themselves.”

Correction: A previous version of this article misspelled Donie O’Sullivan’s name.