SEOUL, South Korea — Twitter’s attempt to crowdsource fact-checking through user-written Community Notes has mostly failed, Alex Mahadevan, director of the Poynter Institute’s MediaWise, told a group of fact-checkers on June 30.

Community Notes, once known as Birdwatch, is a Twitter program that allows certain users to submit helpful context to tweets that might be misleading or missing important information.

In November, new Twitter owner Elon Musk tweeted that the program “has incredible potential for improving information accuracy on Twitter.” Mahadevan said the program has been launched in more than 15 countries, and there are nearly 133,000 Community Notes users.

In practice, however, the experiment has been lackluster, said Mahadevan of MediaWise, a social-first media literacy program.

Twitter users have written roughly 122,000 notes over the course of the program, but not all of those notes are shown publicly. Regular Twitter users can see only about 10,400 of them, or about 8.5%, Mahadevan said. He was speaking in Seoul, South Korea, at GlobalFact10, the world’s largest fact-checking summit, which is hosted by the International Fact-Checking Network at the Poynter Institute and Korea’s SNUFactCheck.

To determine which Community Notes see the light of day, Twitter relies on ratings from other Community Notes users, who can read notes and evaluate whether those notes cite high-quality sources, are easy to understand, provide important context and more. If notes get enough ratings stating that they are helpful, they are more likely to be displayed publicly, Mahadevan explained.

That’s not the only requirement for a note to become public, however.

The program is severely hampered by a second requirement: For a Community Note to become public, it has to be accepted by people from across the political spectrum.

“It has to have ideological consensus,” he said. “That means people on the left and people on the right have to agree that that note must be appended to that tweet.”

Essentially, it requires a “cross-ideological agreement on truth,” and in an increasingly partisan environment, achieving that consensus is almost impossible, he said.

Another complicating factor is that a Twitter algorithm looks at users’ past behavior to infer their political leanings, Mahadevan said. Twitter then waits until a similar number of people on the political right and left have agreed before it attaches a public Community Note to a tweet.

“Maybe that would have worked four years ago,” he said. “That does not work anymore because 100 people on the left and 100 people on the right are not going to agree that vaccines are effective.”
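The requirement Mahadevan describes can be illustrated with a toy rule. The sketch below is not Twitter's scoring code; the `Rating` class, the `note_is_public` function and both thresholds are invented here purely to show why a note that only one side rates as helpful would stay hidden.

```python
from dataclasses import dataclass


@dataclass
class Rating:
    leaning: str   # "left" or "right"; a hypothetical label inferred for the rater
    helpful: bool  # whether this rater marked the note as helpful


def note_is_public(ratings, min_per_side=5, min_helpful_share=0.8):
    """Toy rule: show a note only if raters on BOTH sides mostly found it helpful.

    The thresholds are assumptions chosen for illustration.
    """
    for side in ("left", "right"):
        side_ratings = [r for r in ratings if r.leaning == side]
        if len(side_ratings) < min_per_side:
            return False  # not enough raters from this side yet
        helpful_share = sum(r.helpful for r in side_ratings) / len(side_ratings)
        if helpful_share < min_helpful_share:
            return False  # this side does not agree the note is helpful
    return True


# A pop-culture note that raters on both sides find helpful
uncontested = [Rating("left", True)] * 6 + [Rating("right", True)] * 6

# A partisan note: the left rates it helpful, most of the right does not
contested = (
    [Rating("left", True)] * 6
    + [Rating("right", False)] * 5
    + [Rating("right", True)]
)

print(note_is_public(uncontested))  # True
print(note_is_public(contested))    # False
```

Under this simplified rule, the contested note stays hidden no matter how many raters on one side endorse it, which is the dynamic Mahadevan says keeps notes on divisive topics from appearing.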

Despite the limitations, research produced by Twitter suggested that when Community Notes with cross-ideological agreement were made publicly available, they helped slow the spread of misinformation. People who saw the notes were “significantly less likely to reshare social media posts than those who did not see the annotations,” Twitter reported.

The problem is that regular Twitter users might never see those notes. Sixty percent of the most-rated notes are not public, meaning the Community Notes on “the tweets that most need a Community Note” aren’t public, Mahadevan said.

“So this algorithm that was supposed to solve the problem of biased fact-checkers basically means there is no fact-checking,” he said. “So crowd-sourced fact-checking in the style the Community Notes wants to do (is) essentially non-existent.”

Yoel Roth, the former head of trust and safety at Twitter, lamented the weaknesses of Community Notes at GlobalFact10. He said Twitter previously had a two-pronged approach to addressing misinformation: a centralized method, in which the company applied labels to misinformation, and a community-sourced method.

Now, it has put “all of its eggs in the basket of Birdwatch,” Roth said.

“I think that’s a failure,” he said. “I think Community Notes is an interesting concept. I think it has some areas where it’s successful. I think we’re seeing some interesting applications of the product. But there’s many other areas where it is not a robust solution for harm mitigation.”

On some occasions, Community Notes that have been shown publicly have also been flawed, Mahadevan said.

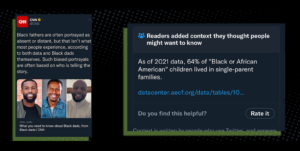

He cited one example of a Community Note that was attached to a CNN tweet. CNN had written: “Black fathers are often portrayed as absent or distant, but that isn’t what most people experience, according to both data and Black dads themselves. Such biased portrayals are often based on who is telling the story.”

Example of a Community Note that used outdated information to respond to a CNN tweet. It was eventually removed.

Twitter users added a note that said, “As of 2021 data, 64% of ‘Black or African American’ children lived in single-parent families,” and cited outdated data.

Mahadevan said that Community Note, which added no substance and included misleading data, was publicly added to a CNN tweet.

“It was a racist note based on faulty data,” he said. “Millions of people saw this.”

The note was taken down after people downvoted it, but it was still up for several days.

Despite the limited number of notes that are publicly available, Mahadevan said the program has some value for fact-checkers.

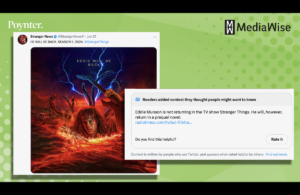

“There is hope,” he said with a laugh. “Community Notes is really, really good at flagging and appending context fact-checks to non-political things. To pop culture. To maybe not-as-harmful mis- and dis- information — because nobody disagrees about it.”

Community Notes are more often successfully added to low-stakes, sometimes funny or satirical tweets, Mahadevan said. While the most harmful misinformation is left to professional fact-checkers, Community Notes might be able to fill the gap for lower-level misinformation, he said.

Twitter Community Notes have generally been more successful outside of political topics, such as in the world of pop culture.

The notes are also good at catching and identifying AI-generated content and scams, Mahadevan added.

“These Community Notes people are very, extremely online, so they’re actually seeing a lot of the AI stuff on Reddit or other areas, so they’re able to pick up the AI-generated stuff,” and frequently do so more quickly than fact-checkers, he said.

Many harmful products that are advertised on Twitter are being flagged with Community Notes because “everyone hates scams,” Mahadevan said.

Data from the Community Notes program can also be valuable to fact-checkers, Mahadevan said.

Twitter provides a variety of data, including the notes submitted, the way those notes are rated and each note’s status history, which shows when a note was rated helpful and shown publicly or rated unhelpful and hidden. Mahadevan said the data is updated roughly daily.

Using this data, he said fact-checkers can write stories answering questions such as:

- What do people consider trusted sources?

- How many views do notes have compared to the views of the original flagged tweets?

- Who are the most prolific Community Notes users?

- How many misleading tweets are deleted after a Community Note?

One challenge is that the data isn’t country-specific, though notes can be sorted by language, Mahadevan said.
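For fact-checkers who want to try this kind of analysis, here is a minimal sketch of one such calculation: the share of notes shown publicly, grouped by language. The rows are made up, and the field names (`note_id`, `language`, `status`) and status values are assumptions for illustration, not the layout of Twitter's actual data files.

```python
from collections import Counter

# Made-up sample rows; field names and status values are illustrative only
notes = [
    {"note_id": 1, "language": "en", "status": "shown"},
    {"note_id": 2, "language": "en", "status": "hidden"},
    {"note_id": 3, "language": "en", "status": "hidden"},
    {"note_id": 4, "language": "ko", "status": "shown"},
    {"note_id": 5, "language": "ko", "status": "hidden"},
]


def public_share_by_language(rows):
    """Return, for each language, the fraction of notes that are shown publicly."""
    total = Counter(r["language"] for r in rows)
    shown = Counter(r["language"] for r in rows if r["status"] == "shown")
    return {lang: shown[lang] / total[lang] for lang in total}


shares = public_share_by_language(notes)
for lang, share in sorted(shares.items()):
    print(f"{lang}: {share:.1%} of notes shown publicly")

# The same arithmetic applied to the figures Mahadevan cited:
# about 10,400 public notes out of roughly 122,000 written
print(f"{10_400 / 122_000:.1%}")  # 8.5%
```

The same grouping approach could be pointed at other fields, such as the note author or the sources cited, to explore the questions listed above.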

If fact-checkers join the Community Notes program, they can also see the notes that have been submitted, Mahadevan said.

“While they may never see the light of day, you can write a fact-check about them,” he said. “It’s a great place to look for claims that you might not have seen before.”