The Week in Fact-Checking is a newsletter about fact-checking and accountability journalism, from Poynter's International Fact-Checking Network & the American Press Institute's Accountability Project. Sign up here.

Meet the researchers working to solve misinformation

Over the past year, interest in misinformation research has ballooned. To highlight some of the people working behind the scenes, Poynter’s Daniel Funke profiled a few researchers whose work has changed Facebook’s fact-checking program, has been cited in countless pieces on fake news and is leading to solutions for debunking deepfake videos.

The article is part two in a three-part series from Poynter on the people behind the misinformation phenomenon. Part one profiled some of the students who are working on misinformation-related projects around the world, while part three will focus on some infamous fake news writers. Have someone you think we should know about? Email factchecknet@poynter.org.

This is how we do it

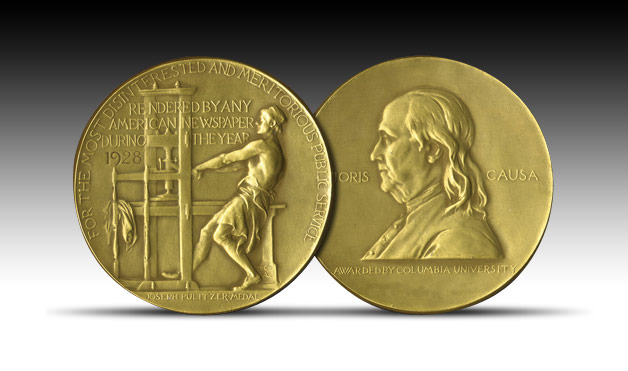

- Here’s how attention to detail and fact-checking helped this newsroom get a Pulitzer.

- A French journalist has visited 81 schools armed with a video designed to teach kids about the dangers of conspiracy theories. From NPR’s “Pick a Number” series.

- Whose job is it to teach people to tell real journalism from fake journalism? A new report from the American Press Institute has an answer: Journalists.

Research you can use

- This working paper found that delusional people and fundamentalists are more prone to falling for fake news.

- Did you know that bullshitting has an academic definition? Here’s a study on the social situations that make people bullshitters.

- Artificial intelligence isn’t always the answer to fighting misinformation — sometimes it’s the problem, says an AI researcher in The Conversation.

This is bad

- A story headlined “When a stranger takes your face” in The Washington Post details Facebook’s “failed crackdown” on fake accounts. In related(ish) news, Welsh police facial recognition technology wrongly identified more than 2,000 people as potential criminals.

- Introducing the fake reporter: The person you hire when real reporters won’t report your “truth.”

- A state legislator in Maine said she was “shaken” to learn of her own death from a sketchy Facebook page that looks deceptively like an official police department page. The page remains on Facebook, despite complaints.

This is fun

- On the Late Show, Stephen Colbert explains why he’s doubtful that President Trump’s lawyer Rudy Giuliani “will get his facts straight.”

- NPR’s “Ask Me Another” show tests two guests on their ability to spot fake news.

- PolitiFact did a Reddit AMA on Tuesday.

A closer look

- PBS reporter Elizabeth Flock spends a week with Russian propaganda media, and finds that dezinformatsiya can really mess with your head.

- Bots and trolls are becoming a nuisance in the run-up to the Mexican election, The New York Times reports.

- In an effort to thwart conspiracy theories and misinformation, The Globe and Mail will partner with ProPublica to monitor political advertisements during the Canadian elections; and Facebook is blocking foreign ads during the Irish elections.

If you read one more thing

Amazon has a fake review problem, BuzzFeed News reports. (But really, who doesn’t?)

15 quick fact-checking links

- This is a contender for correction of the week.

- An Internet hoax about the Parkland school shootings became real for this New York woman.

- A pro-EU disinformation project wrote about a pro-Kremlin copycat of a Swedish fact-checking outlet that recently launched by copying a similar website in Norway. Say that 10 times fast.

- Does the fake news industry weaponize women?

- Fake news has infiltrated the world of Russian fashion stars, and parody Twitter accounts in India are attacking just about everyone.

- Newsy and PolitiFact team up for a fact-checking TV show.

- Government officials in Russia are worried about fake news, too, you know.

- Two books for you: An excerpt from “After the Fact: The Erosion of Truth and the Inevitable Rise of Donald Trump” and a review of the audiobook “A Field Guide to Lies: Critical Thinking in the Information Age.”

- Not all filter bubbles are bad, and we need to stop blaming them alone for our mass misinformation problem, says a professor at the University of North Carolina.

- Turkish fact-checker Teyit launched a dashboard where readers can see, in real time, which claims it is checking.

- Conspiracy theories now have their own emojis on the right-wing internet.

- British fact-checker Full Fact is asking readers to submit feedback on its fact-checking process.

- From International Fact-Checking Day, here’s a tip sheet with 10 ways to verify viral social media videos.

- The Belgian government set up a Reddit-style public consultation on solutions to disinformation.

- A new family-style board game is designed to teach Swedes how to fact-check.

Until next week,